Check out our White Paper Series!

A complete library of helpful advice and survival guides for every aspect of system monitoring and control.

1-800-693-0351

Have a specific question? Ask our team of expert engineers and get a specific answer!

Sign up for the next DPS Factory Training!

Whether you're new to our equipment or you've used it for years, DPS factory training is the best way to get more from your monitoring.

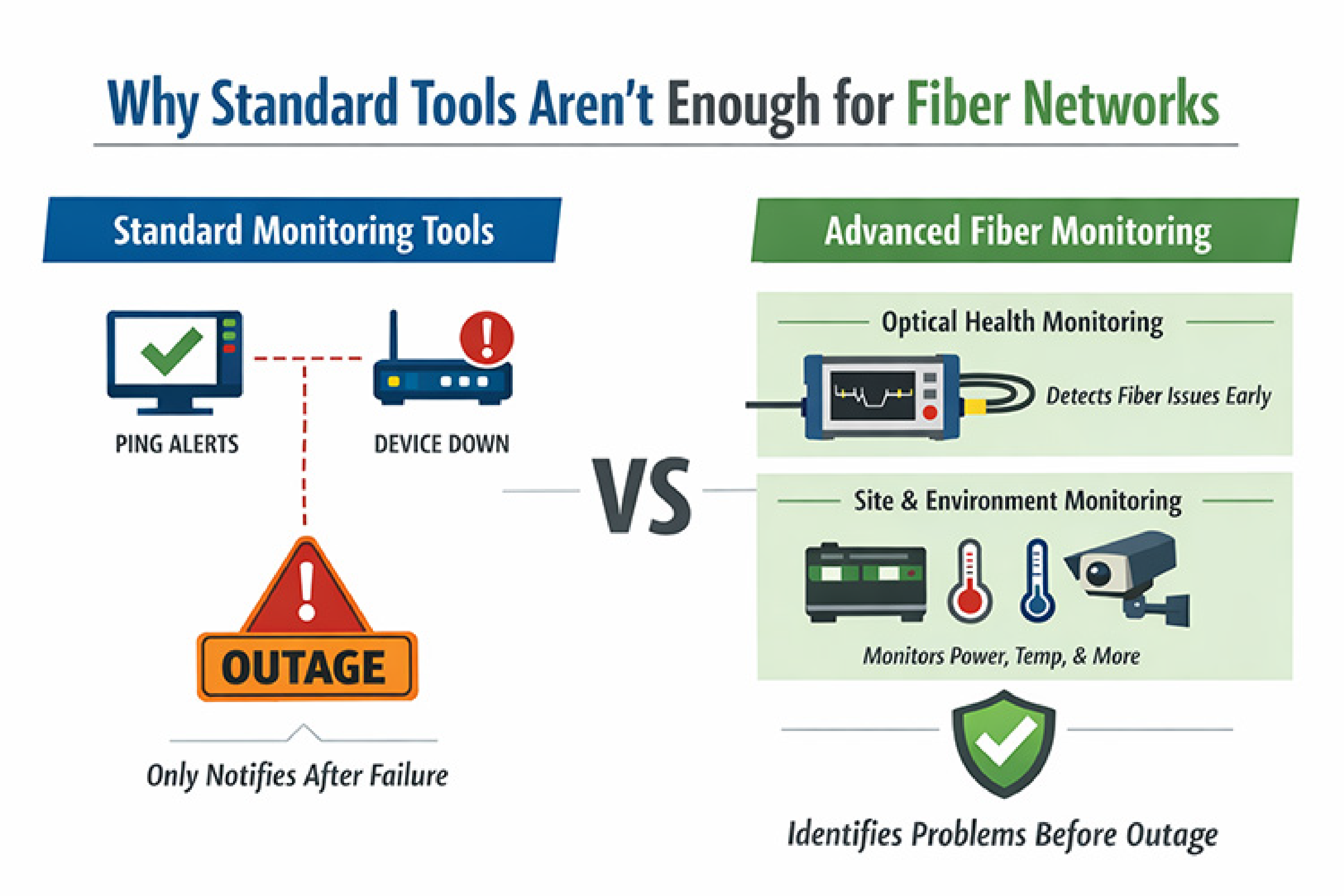

One of the most common questions we get from fiber operators is some version of this: "We already have tools that tell us when something is down. Why do we need a dedicated monitoring system on top of that?"

The answer is that fiber networks fail in ways that standard tools were never designed to detect. Light stays inside the glass during normal operation, which means a fiber degrading toward failure looks exactly the same as a healthy one from the outside. By the time your existing tools notice a problem, you already have an outage.

At DPS Telecom, we have spent nearly four decades helping telecom operators, utilities, and ISPs build monitoring systems for distributed networks. With more than 172,000 deployed monitoring devices across more than 1,500 organizations worldwide, we have seen most of the ways fiber monitoring can go right and wrong. This guide reflects what we have learned about choosing equipment that actually works in the field, not just in a vendor's demo.

When clients first come to us, they often have some monitoring in place. They can ping devices. They get alerts when a router goes dark. What they usually cannot see is the layer beneath that, and that is where most fiber outages actually originate.

Fiber monitoring involves two separate but complementary problem sets, and you need to address both.

Optical health monitoring uses instruments like Optical Time Domain Reflectometers (OTDRs) to measure splice loss, verify fiber length, and locate faults. The Fiber Optic Association (FOA) describes the OTDR as useful for testing fiber optic cable integrity, including splice verification, length measurement, and fault location. In a remote monitoring deployment, those OTDRs shift from handheld field instruments to fixed, rack-mounted units that test many fibers automatically using optical switching.

Site and infrastructure telemetry covers everything the OTDR cannot see: cabinet power, battery voltage, temperature, humidity, door access, smoke detection, and IP reachability of network elements. In our experience, non-optical failures cause a significant share of outages at remote sites. A cabinet loses power at 2 a.m. The OTDR shows the fiber is fine because the fiber is fine. But if your generator has been running dry for six hours and your batteries are at 15%, you have a problem that no optical instrument will catch. A NetGuardian RTU at that site, monitoring discrete alarms and battery voltage, would have notified your NOC long before things got critical.
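As a rough illustration of the battery scenario above, an analog voltage reading can be mapped onto alarm levels with a handful of thresholds. This is a minimal sketch, not how any particular RTU implements it: the `AnalogThresholds` class, the `classify_voltage` function, and every threshold value are hypothetical and assume a nominal 48 V DC plant measured as a magnitude.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalogThresholds:
    """Hypothetical four-level thresholds for a nominal 48 V DC plant (volts, magnitude)."""
    major_under: float = 46.0
    minor_under: float = 50.0
    minor_over: float = 56.0
    major_over: float = 58.0


def classify_voltage(volts: float, t: AnalogThresholds = AnalogThresholds()) -> str:
    """Return the alarm level for one battery-string voltage reading."""
    if volts <= t.major_under:
        return "MAJOR_UNDER"
    if volts <= t.minor_under:
        return "MINOR_UNDER"
    if volts >= t.major_over:
        return "MAJOR_OVER"
    if volts >= t.minor_over:
        return "MINOR_OVER"
    return "NORMAL"


if __name__ == "__main__":
    # A string sagging while the site runs on battery (illustrative readings).
    for reading in (53.5, 49.8, 45.9):
        print(f"{reading:.1f} V -> {classify_voltage(reading)}")
```

The minor threshold is what buys time: it fires while the batteries still have capacity left, well before the major threshold signals that the site is close to dropping.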

We built DPS around that second layer because we saw early on that optical test equipment alone left operators with a false sense of coverage. The most effective monitoring strategy addresses both layers and connects them into a single operational workflow.

Before you look at a single spec sheet, write down your monitoring objectives. This sounds basic, but it is the step we see skipped most often, and it is where mismatches happen. Here is how different objectives point to different equipment.

If your primary goal is knowing exactly where a fiber break is so you can dispatch the right crew with the right equipment, you are looking for a fixed OTDR-based platform. These systems run continuous or scheduled sweeps and compare current traces against baseline measurements, pinpointing breaks and degradations automatically without requiring a technician in the field first. Getting that right-person, right-equipment dispatch is what separates a two-hour restoration from a six-hour one.
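The baseline comparison described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not any vendor's algorithm: events are reduced to a distance and a loss value, and the `Event` type, the `compare_to_baseline` function, and both tolerances (50 m matching window, 0.5 dB change threshold) are hypothetical.

```python
from typing import List, NamedTuple, Tuple


class Event(NamedTuple):
    distance_km: float  # location of the event along the fiber
    loss_db: float      # measured loss at the event


def compare_to_baseline(
    baseline: List[Event],
    current: List[Event],
    distance_tol_km: float = 0.05,  # assumed matching window
    loss_delta_db: float = 0.5,     # assumed change threshold
) -> List[Tuple[str, float, float]]:
    """Flag events that are new or noticeably worse than the baseline trace."""
    findings = []
    for ev in current:
        # Find a baseline event at (approximately) the same location.
        match = next(
            (b for b in baseline
             if abs(b.distance_km - ev.distance_km) <= distance_tol_km),
            None,
        )
        if match is None:
            findings.append(("NEW_EVENT", ev.distance_km, ev.loss_db))
        elif ev.loss_db - match.loss_db >= loss_delta_db:
            delta = round(ev.loss_db - match.loss_db, 2)
            findings.append(("DEGRADED", ev.distance_km, delta))
    return findings


if __name__ == "__main__":
    baseline = [Event(2.0, 0.10), Event(7.5, 0.30)]
    current = [Event(2.01, 0.12), Event(7.5, 1.00), Event(11.2, 6.00)]
    for finding in compare_to_baseline(baseline, current):
        print(finding)
```

The output of a comparison like this is what turns a raw trace into a dispatch decision: a location to send the crew to and an indication of how severe the change is.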

Some operators we work with are less concerned about responding fast to failures and more focused on preventing them altogether. Slowly worsening splice loss, increasing reflectance, or intermittent flapping events can signal trouble weeks or months before a hard failure appears. VIAVI's ONMSi RFTS brochure describes a system that scans the network continuously to trend degradation and send alarms without requiring field technician dispatch. If this is your goal, you need a system that supports continuous scanning and long-term trending, not just event-based alerting.
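To make the idea of long-term trending concrete, here is a minimal sketch that fits a straight line to historical splice-loss readings and estimates how long until an alarm threshold is crossed. The `days_until_threshold` function, the sample readings, and the 0.30 dB threshold are all hypothetical, and a linear fit is a simplifying assumption; real degradation is not necessarily linear.

```python
from typing import Optional, Sequence


def days_until_threshold(
    days: Sequence[float],
    loss_db: Sequence[float],
    threshold_db: float,
) -> Optional[float]:
    """Estimate days from the last sample until loss reaches threshold_db.

    Uses an ordinary least-squares line through (day, loss) samples.
    Returns None when the trend is flat or improving.
    """
    n = len(days)
    if n < 2 or n != len(loss_db):
        raise ValueError("need at least two matching samples")
    mean_x = sum(days) / n
    mean_y = sum(loss_db) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("samples must span more than one day")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, loss_db))
    slope = sxy / sxx                 # dB per day
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None
    crossing_day = (threshold_db - intercept) / slope
    return max(0.0, crossing_day - days[-1])


if __name__ == "__main__":
    sample_days = [0, 30, 60, 90]
    sample_loss = [0.10, 0.13, 0.16, 0.19]  # illustrative splice-loss readings
    remaining = days_until_threshold(sample_days, sample_loss, threshold_db=0.30)
    print(f"Estimated days until threshold: {remaining:.0f}")
```

An estimate like this is only as good as the measurement history behind it, which is why event-based alerting alone cannot support this objective.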

Passive optical networks add a layer of complexity that catches a lot of operators off guard. The Fiber Broadband Association explains that each doubling of split ratio reduces power by roughly 3 dB. A 1x32 split means approximately 15 dB of splitting loss before you have even accounted for fiber attenuation, connectors, and splices. If your OTDR does not have sufficient dynamic range and PON-capable test methods, you will have blind spots beyond the splitter that you may not discover until a client calls to report an outage.
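The arithmetic behind the 1x32 figure is worth making explicit. An ideal 1xN splitter divides power N ways, which is 10·log10(N) dB, and the 3 dB-per-doubling rule is the rounded form of the same relationship. The sketch below computes both; the function names are illustrative, and real splitters add some excess loss on top of the ideal figure, so check the datasheet for your parts.

```python
import math


def ideal_split_loss_db(split_ratio: int) -> float:
    """Ideal power-division loss of a 1xN splitter: 10 * log10(N)."""
    if split_ratio < 1:
        raise ValueError("split ratio must be >= 1")
    return 10 * math.log10(split_ratio)


def rule_of_thumb_loss_db(split_ratio: int) -> float:
    """Planning approximation: roughly 3 dB for each doubling of the split ratio."""
    if split_ratio < 1:
        raise ValueError("split ratio must be >= 1")
    return 3.0 * math.log2(split_ratio)


if __name__ == "__main__":
    for n in (2, 4, 8, 16, 32, 64):
        print(f"1x{n:<3} ideal {ideal_split_loss_db(n):5.2f} dB   "
              f"rule of thumb {rule_of_thumb_loss_db(n):5.2f} dB")
```

For a 1x32 split, five doublings give the approximately 15 dB quoted above. That loss is consumed before fiber attenuation, connectors, and splices are counted.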

If you need to monitor fibers that are actively carrying traffic, you need in-service capability. Test wavelengths, typically 1625 or 1650 nm, are injected in a way that does not interfere with traffic. A VIAVI white paper identifies the most common reasons operators pursue this: no available dark fibers, leased fiber where they lack physical access, and security requirements around data confidentiality. If any of those apply to you, in-service monitoring is not optional.

For operators whose real goal is end-to-end service availability, optical monitoring alone will not get you there. Power failures, overheating cabinets, and unreachable IP nodes all cause outages that no OTDR will detect. This is where remote telemetry units fit in. They collect discrete and analog alarms, perform reachability checks, and forward events to your NOC. At DPS Telecom, building this layer is what we have spent the better part of four decades getting right.

Most operators end up using some combination of these approaches. Knowing what each one does, and what it does not do, is what keeps you from buying the wrong system.

| Monitoring Approach | What It Does | Strengths | Key Tradeoffs | Best Fit |
|---|---|---|---|---|
| Fixed OTDR + Optical Switching (RFTS) | Rack-mounted OTDRs at strategic locations, testing many fibers automatically | Rapid fault localization, automated testing, supports P2P and PON | Needs correct dynamic range; requires baseline trace governance | Metro/core routes, FTTx, fiber-to-wireless, data center interconnect |
| In-Service OTDR Filtering | Filters/WDM couplers inject test wavelengths while filtering traffic wavelengths | Enables monitoring on active fibers without interrupting service | Adds insertion loss; Raman scattering can reduce OTDR dynamic range | Networks with few/no dark fibers, leased fiber, security-motivated monitoring |
| Distributed Fiber Sensing (DTS/DAS) | Uses scattering techniques to infer temperature/strain along fiber | Detects temperature and strain changes for early warning | Higher complexity and cost; sensing resolution must be validated | Pipelines, utilities, perimeter monitoring |
| Site Telemetry RTU / Alarm Collector | Collects discrete/analog alarms, performs reachability checks, forwards events via SNMP | Covers non-optical root causes: power, temperature, door/security, IP reachability | Does not locate fiber faults by itself; requires good alarm engineering | Remote huts/cabinets, OLT/POP sites, any distributed site where environmental or power issues cause outages |

The first three categories address the optical plant. The fourth addresses everything else. Both are necessary for a complete picture of network health.

This is the part of the conversation where a lot of monitoring projects go wrong. A system that performs well in a vendor's demo can fail in your actual plant if it has not been dimensioned properly for your specific conditions. Here is how we walk through this with clients.

The single most common mistake we see is operators selecting an OTDR based on marketing dynamic range figures, then discovering in the field that it cannot see through their splitter architecture. Dynamic range on a spec sheet does not account for your actual connector quality, fiber plant age, or splitter configuration.

The FOA recommends building loss budgets from component-level values. Typical planning figures include roughly 0.3 dB per connector and approximately 0.15 dB per single-mode fusion splice. Match your fiber attenuation assumptions to your deployed fiber type. A Prysmian G.652.D datasheet, for example, lists attenuation limits of 0.35 dB/km at 1310 nm and 0.21 dB/km at 1550 nm. Build that budget from the ground up, then check OTDR dynamic range, distance range, event dead zone, and attenuation dead zone against your actual longest links and densest event spacing.
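A ground-up loss budget using the planning figures quoted above might look like the following sketch. The per-connector, per-splice, and attenuation values come from the text; the link dimensions, the `link_loss_db` and `otdr_can_see_end` helpers, and the 5 dB margin are hypothetical and should be replaced with your own plant data and your vendor's guidance.

```python
CONNECTOR_LOSS_DB = 0.3    # planning figure per connector (from the text)
SPLICE_LOSS_DB = 0.15      # planning figure per single-mode fusion splice
ATTEN_DB_PER_KM = {1310: 0.35, 1550: 0.21}  # G.652.D datasheet limits cited above


def link_loss_db(length_km: float, wavelength_nm: int,
                 connectors: int, splices: int,
                 splitter_loss_db: float = 0.0) -> float:
    """Sum component-level losses for one link at one wavelength."""
    fiber = length_km * ATTEN_DB_PER_KM[wavelength_nm]
    return (fiber
            + connectors * CONNECTOR_LOSS_DB
            + splices * SPLICE_LOSS_DB
            + splitter_loss_db)


def otdr_can_see_end(total_loss_db: float, dynamic_range_db: float,
                     margin_db: float = 5.0) -> bool:
    """Check a candidate OTDR against the budget.

    margin_db is an assumed safety margin, not a standard value.
    """
    return dynamic_range_db >= total_loss_db + margin_db


if __name__ == "__main__":
    # Hypothetical longest link: 20 km, 4 connectors, 8 splices, tested at 1550 nm.
    loss = link_loss_db(20, 1550, connectors=4, splices=8)
    print(f"Budgeted link loss: {loss:.2f} dB")
    print("35 dB OTDR sufficient:", otdr_can_see_end(loss, dynamic_range_db=35))
```

Running the same calculation for your longest route and your largest split ratio gives you a requirement to hold each spec sheet against, rather than the other way around.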

Avoid buying a system that works at a small scale but hits a wall when you try to deploy it across your full network. Confirm the platform supports your topology (point-to-point, PON, or both) and can reach your full port count. We have seen operators purchase systems that handled their pilot sites well and then discovered the platform's scaling limits six months into a broader rollout.

If you need to monitor lit fibers, do not take vendor assurances at face value. Validate three things explicitly:

One pattern we see repeatedly is operators who invest heavily in the monitoring hardware and treat integration as something they will figure out later. Later usually means alarms that never make it into the ticketing system, or NOC staff who stop trusting alerts because the workflow is too fragmented to act on.

SNMP is the standard protocol for telemetry and alarm forwarding. The SNMP management framework, described in RFC 3411, outlines the manager/agent model that underpins how monitoring devices report to central management systems. Some fiber test platforms also offer REST APIs and standard trace formats (Telcordia .sor) for integrating measurements into OSS workflows, which matters when you want to attach OTDR measurements to trouble tickets or correlate them with GIS data.

Remote monitoring gear lives in critical infrastructure. NIST SP 800-82r3 emphasizes that operational technology has unique reliability and safety requirements and recommends a risk-based assessment approach. If you are using SNMP, SNMPv3 is the right choice for critical infrastructure: its User-based Security Model (RFC 3414) provides message integrity and user authentication, with optional encryption of message contents. Build security into the architecture from the start.

Over the years, we have developed a selection process that works consistently across telecom, ISP, and utility operators. It runs in two passes: optical fiber health monitoring first, then supporting infrastructure and site telemetry. Both passes then feed into a single operations workflow.

The first question we ask is: what does success look like for your team? "Pinpoint fiber cuts fast" translates to a fixed OTDR + switching solution that continuously tests and compares to baselines. "Detect degradations before they become outages" translates to a system that supports continuous scanning and long-term trending. Vague goals produce systems that cannot prove their own value.

For PON networks, use the 3 dB-per-doubling rule to estimate splitter impact, add fiber attenuation and event losses, and determine your monitoring reach requirements before you open a spec sheet. For lit fibers, require explicit in-service wavelength plans and ask vendors to walk through specifically how they handle Raman/SRS interactions for your topology.

Standardized trace formats (.sor) and open APIs are what allow measurements to flow into tickets and correlate with network alarms. Plan your SNMP strategy up front, including MIB planning and security model choices. If you cannot articulate how an alarm gets from a sensor to an engineer's screen before you purchase the equipment, you are not ready to purchase yet.

This is the step most fiber monitoring projects skip, and it is usually where the gaps show up after deployment.

OTDR-based platforms tell you about fiber health. But if you operate remote cabinets, OLT sites, radio huts, or any distributed network element, you also need to know what is happening at those sites physically: power, temperature, door access, and IP reachability. That is the layer our NetGuardian RTUs are purpose-built for.

The NetGuardian 832A, for example, supports 32 discrete alarms (expandable to 176), 32 ping alarms, 8 analog inputs, 8 controls, and 8 serial ports, with dual Ethernet separation for management network segmentation. For operators who need centralized alarm consolidation at scale, our T/Mon NOC platform supports up to 1 million alarm points across 25+ protocols, with full alarm history logging and web access. T/Mon can pull in alarms from your OTDR-based RFTS alongside site telemetry from NetGuardian RTUs, so your NOC sees fiber health and infrastructure status in a single view rather than toggling between disconnected systems.

The point is not to choose between optical monitoring and site telemetry. They address different failure modes. Combining them is what gives you real coverage.

Do not validate a system against your easiest sites. Test against your largest split ratio, your longest route, your most congested cabinet, and at least one lit-fiber segment. Test the alarm workflow end-to-end: from sensor trigger to NOC notification. What works in a lab often behaves differently under real-world conditions, and a pilot is far less expensive than a full deployment that underperforms.

Ignoring non-optical failure modes. An OTDR can confirm the fiber is intact. It cannot tell you the cabinet lost commercial power six hours ago and is now running on backup batteries at 15%. Many outages we have helped investigate traced back to power, temperature, or access failures at remote sites, all of which a properly deployed RTU would have caught.

Trusting spec sheet dynamic range without validating against your plant. The number on the sheet does not account for your specific splitter architecture, connector condition, or fiber plant age. Build the loss budget first, then check the spec.

Treating integration as something to figure out later. If your monitoring system generates alarms that do not flow into your existing ticketing and escalation processes, your team will stop trusting them. We have seen this happen. The fix is always more expensive than designing it right the first time.

Skipping baseline trace governance. The FOA notes that OTDRs are commonly used to create a baseline picture of newly installed fiber, and that later comparisons identify degradation. Without maintained baselines, your RFTS cannot do its job. Comparison requires a reference.

Underestimating PON monitoring complexity. A 1x32 splitter consumes roughly 15 dB of your power budget before fiber attenuation enters the picture. If you have not accounted for this in your dynamic range requirements, you will have blind spots beyond the splitter that will not surface until a client reports service down.

Your network is unique. The right monitoring strategy depends on your topology, your operational model, and what you are actually trying to protect. We never recommend a generic answer to these questions because generic solutions tend to create the exact blind spots that lead to outages.

What we can tell you from nearly four decades of working on this problem is that the operators who get the best results are the ones who pair optical fiber monitoring with field-proven site telemetry and route everything into a unified alarm management workflow. When that infrastructure is in place, your NOC team stops reacting to outages and starts preventing them.

If you are working through a fiber monitoring decision and want to talk through how site telemetry fits into your architecture, reach out to our engineering team for a free consultation. We will ask the right questions, listen to what your network actually needs, and help you design a custom-fit solution built around your specific requirements.

Andrew Erickson

Andrew Erickson is an Application Engineer at DPS Telecom, a manufacturer of semi-custom remote alarm monitoring systems based in Fresno, California. Andrew brings more than 19 years of experience building site monitoring solutions, developing intuitive user interfaces and documentation, and opt...